What’s “Good” in Data Science?

At our November Scaletech event, Justin Norman, VP of Data Science at Yelp, talked about how his team approaches data science.

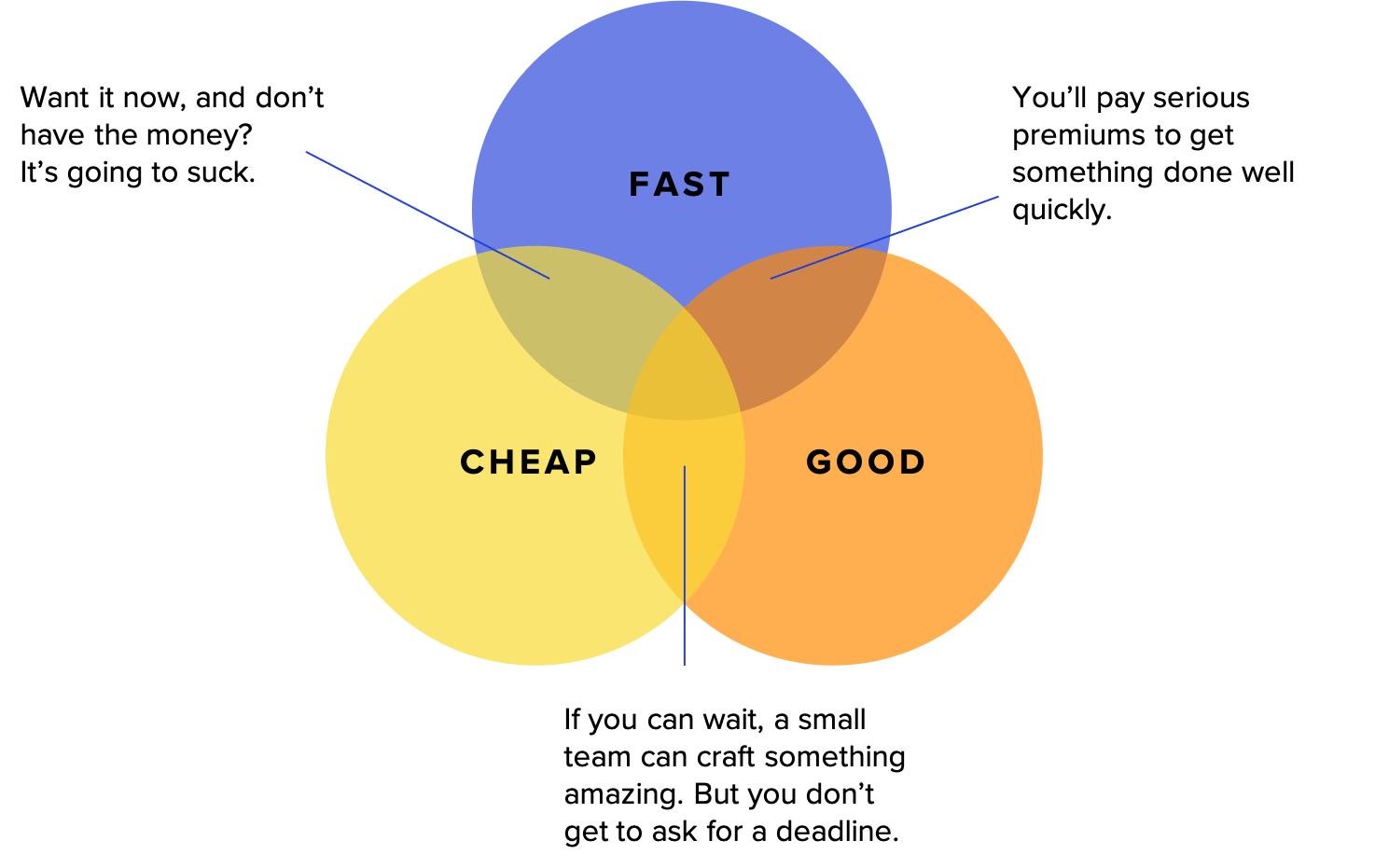

Justin recently wrote an article on bringing an AI product to market, in which he cited the old truism about product delivery: Products can be delivered fast, cheap or good, and you can only pick two.

But this doesn’t answer the obvious question: What’s good? That depends on who you ask. Justin described three stakeholders in AI products; paraphrasing, these are the three archetypes who care about AI products:

- The Operator. This person has to implement the product. They have to keep it running smoothly, with high uptime and few changes. The product has to adapt to changes in data and parameters, and new models shouldn’t cost a lot to train.

- The Scientist. This is the researcher, who wants to uncover new insights. They care about precision, accuracy and reproducibility. They seek to minimize uncertainty.

- The Product Owner. This is the businessperson, who wants to find market advantage. When they market the product, they need certainty: What are the benefits, what’s the ROI, will it definitely work for customers?

Understanding the (healthy) tension between these three stakeholders is key to delivering great AI-powered products. It defines the metrics for success, the trade-offs we’re willing to make, the testing we do and the cost and speed of the final product.

| | Operator | Scientist | Product Owner |
|---|---|---|---|
| Main focus | Is it implementable? | Is it correct? | Is it profitable? |
| Metrics that matter | Mean time between failure, drift, computing cost. | Confidence interval, P-value, reproducibility. | Return on investment (ROI), Total Cost of Ownership (TCO), predictability, consistency. |
| Perspective | The past: Will things continue as they were? | The present: Is it correct now? | The future: How will this improve the business? |
| Audience | Collaborators. | Academic peers. | Business owners. |

These three perspectives explain why the Netflix Prize winner, while technically better than competing, simpler solutions, was never implemented: It was too complicated. Healthy AI product management is a trade-off between business value, scientific correctness, and pragmatic manageability.

Certain factors determine which of the three stakeholders takes priority.

- For AI products that have to scale, or where downtime would have a widespread impact on the business and its users, the Operator will have the upper hand.

- For products where the cost of false positives or false negatives is high, or where the algorithms will be reviewed by regulators, the Scientist’s perspective will prevail. The research needs to be defensible and explainable.

- When the business is riding on a strategic feature, competitive pressures are high, or the sales cycle is too long, the Product Owner’s perspective will win out. The product needs to move the bottom line or the business will fail.

Managers and executives need to set the balance between these three competing viewpoints, communicate them clearly to teams, and follow up with the appropriate metrics for each perspective. Only then will we have an idea of what “good” means.
